Three steps to fixing the data quality issue plaguing the U.S. health care system

The end result will be a health care system that provides better care at a lower cost.

Data quality is essential to improving the U.S. health care system. But what is data quality? Basically, data quality is about extracting value for patients, clinicians and payers. High-quality data is both usable and actionable. In contrast, low-quality data, such as duplicate records, missing patient names, or obsolete information, creates barriers to care delivery and billing/payment while costing money across the health care system through inefficiency.

Unfortunately, health care has lagged virtually every other industry in leveraging data for strategic and operational advantage. This is not for lack of data. More patient health data than ever is being captured today by medical equipment, digital devices and apps. Indeed, if data is gold, health care is sitting on top of a goldmine.

Yet the industry generally has done a poor job of mining and refining this ore to the point where it is useful. That is a wasted opportunity, because refined, quality data would provide value to all health care stakeholders: hospitals, payers, health information exchanges, labs, and patients.

Impact on research, patient outcomes

Poor data quality has negative ramifications throughout health care. The way we bring new medications to market is a great case in point. The first phase is identifying the medication, a process that comprises less than 20% of the cost of drug development. Next come clinical trials, which account for the vast majority of drug development costs.

Clinical trials are long and arduous. Worse, from a data quality standpoint, data are still collected on paper and then transferred to a computer, and they typically are not linked to other data sets for similar types of patients or even to data for other patients in the same clinical trial. If all this information were easily available and everything were automated, clinical trials would cost less than 10% of what they do now.

I just started participating in a clinical trial and it is amazing how much effort is required just to log in to an older system for a single trial. There may be six to 12 different logins and each requires special access permissions. It can take several days to log in to just one of these systems. All that information under these logins should be united to become quality, actionable information that's easily available.

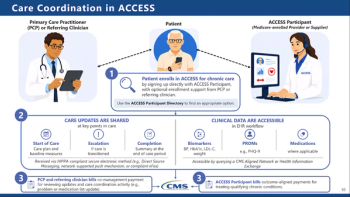

Even in the physician's office, there's a significant lack of aggregated data for patients, leaving clinicians knowing only part of a patient’s story. And when a doctor sees a patient at the hospital, that doctor needs the patient’s ambulatory outpatient records to make sound, evidence-based decisions about appropriate treatment. In far too many cases, however, the data that would inform clinicians at the point of care are trapped in silos scattered across the health care landscape.

Health care has not availed itself of advanced digital technologies such as artificial intelligence (AI) and machine learning (ML), which are being used to transform many other industries. In large part this is because health data is either not accessible or of such poor quality that intelligent machines would struggle to process and analyze it for actionable insights into patient care.

In contrast, quality health care data creates scenarios in which AI/ML can quickly provide clinicians at the point of care with information that enables them to educate patients about their specific condition, offer referrals to appropriate specialists, suggest new medications, and improve outcomes. With the right data and insights, clinicians also might be able to match the patient with a clinical trial that could save the patient’s life.

Low-quality data is a problem for payers because they must make decisions with the data they have, whatever its condition. The problem starts when a patient receives a diagnosis that the payer may not learn about for weeks. So a patient diagnosed with cancer might suddenly have to find a specialist and determine whether the practice takes their insurance.

But if a payer finds out right away that this patient may have cancer, the payer can help guide the patient to the right provider, removing a heavy burden of financial concerns and medical management.

Three steps to quality data

There are three main steps to achieving high data quality. The first is ensuring access to the data the clinician needs. There are still many legacy systems in health care where the vast majority of medical records are housed on an organization’s own servers and not in the cloud, where they could be more easily accessed and aggregated by authorized users. While health care interoperability and data sharing have improved in recent years, we’re still building integrations one at a time. Bringing data into a central database where it can be turned into gold remains a challenge.

The next step involves identity management. Much of the value in health data comes from its ability to document longitudinal change in individual patients. Clinicians may, for example, want to see how medications or disease processes affect lab results for a particular patient. Even if they can connect disparate data sources and bring the data into a single database, without identity management they can't reliably associate the data with a specific patient. With effective identity management, though, it is possible to create a good longitudinal patient record that can bring huge clinical and efficiency benefits.
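To make the idea concrete, here is a minimal sketch in Python of how records from different sources might be linked to one patient and ordered over time. Everything in it is an assumption for illustration: the field names (`name`, `dob`, `date`, `a1c`), the sample records, and the simple rule of matching on a cleaned-up name plus date of birth. Real identity management typically weighs many more attributes and tolerates typos and missing values, so treat this as a toy version of the concept rather than a description of any vendor's method.

```python
from collections import defaultdict

def match_key(record):
    """Build a simple deterministic match key from name and date of birth."""
    # Lowercase, drop commas, and collapse whitespace.
    cleaned = " ".join(record["name"].lower().replace(",", " ").split())
    # Sort name tokens so "Doe, Jane" and "jane doe" produce the same key.
    tokens = sorted(cleaned.split())
    return (" ".join(tokens), record["dob"])

def link_records(records):
    """Group records by match key and sort each group by encounter date."""
    patients = defaultdict(list)
    for rec in records:
        patients[match_key(rec)].append(rec)
    for recs in patients.values():
        # ISO-8601 date strings sort correctly as plain strings.
        recs.sort(key=lambda r: r["date"])
    return dict(patients)

# Hypothetical records arriving from two different systems.
records = [
    {"source": "lab", "name": "Doe, Jane", "dob": "1980-02-14",
     "date": "2023-05-01", "a1c": 7.9},
    {"source": "ehr", "name": "jane doe", "dob": "1980-02-14",
     "date": "2023-01-10", "a1c": 8.4},
    {"source": "ehr", "name": "John Roe", "dob": "1975-07-30",
     "date": "2023-03-02", "a1c": 6.1},
]

linked = link_records(records)
for key, recs in linked.items():
    print(key, [(r["date"], r["a1c"]) for r in recs])
```

In this toy data, the two Jane Doe records end up in one group in date order, which is the longitudinal view a clinician would want when tracking a lab value over time.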

The third step finally makes the data usable and actionable. Once the data is in the right patient's chart and forms a longitudinal record, it must be organized and easy for clinicians to locate and read. This requires a process called data normalization. Health data can come from multiple sources (EHRs, labs, pharmacy systems, etc.), all of which may use different coding for a medical procedure, different terms for a certain test, or even different language to categorize gender. Data normalization creates a common terminology that enables the semantic interoperability necessary to make data actionable.
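A small Python sketch can illustrate what normalization means in practice. The lookup tables, the canonical labels (`hemoglobin_a1c`, `male`, `female`) and the record fields below are hypothetical and chosen only for illustration; real systems generally map to established standard vocabularies and handle far more variation than a pair of dictionaries can.

```python
# Hypothetical lookup tables mapping source-specific terms to one common label.
TEST_NAMES = {
    "hba1c": "hemoglobin_a1c",
    "a1c": "hemoglobin_a1c",
    "hemoglobin a1c": "hemoglobin_a1c",
    "glycated hemoglobin": "hemoglobin_a1c",
}
SEX_VALUES = {
    "m": "male",
    "male": "male",
    "f": "female",
    "female": "female",
}

def normalize(record):
    """Return a copy of the record with test name and sex mapped to common terms."""
    out = dict(record)
    test = record["test"].strip().lower()
    sex = record["sex"].strip().lower()
    # Terms missing from the tables are flagged rather than guessed.
    out["test"] = TEST_NAMES.get(test, "unmapped")
    out["sex"] = SEX_VALUES.get(sex, "unknown")
    return out

# The same test reported three different ways by three hypothetical sources.
incoming = [
    {"source": "ehr", "test": "HbA1c", "sex": "F"},
    {"source": "lab", "test": "Glycated Hemoglobin", "sex": "female"},
    {"source": "pharmacy", "test": " a1c ", "sex": "f"},
]

normalized = [normalize(r) for r in incoming]
for row in normalized:
    print(row)
```

After normalization, all three records carry the same test label and the same value for sex, so they can be compared and analyzed together regardless of where they originated.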

There’s no magic bullet for improving health care data quality. It will require a joint effort and the innovation inherent in the free market. There will be companies that will help us collect data, and there will be companies and entities that will help us connect to it. There will be technologies that will identify the data and others that will normalize the data so it looks similar, and becomes actionable, no matter where it originated. And there will be companies that provide quality databases that health care stakeholders can use to perform advanced analytics. The end result will be a health care system that provides better care at a lower cost.

Bess is CEO and co-founder of 4medica, which provides clinical data management and health care interoperability software and services.